Image classification is the process of assigning land cover classes to pixels. Common classes include water, urban, forest, agriculture, and grassland.

In remote sensing, there are four main types of image classification methods:

- Unsupervised image classification

- Supervised image classification

- Object-based image analysis

- Deep learning object detection

The two most popular image classification methods are supervised and unsupervised classification.

However, object-based image analysis and deep learning have become increasingly popular because they work well with high-resolution data.

1. Unsupervised Classification

Unsupervised classification is a straightforward yet powerful technique used in remote sensing to group pixels in an image. It works by identifying patterns and similarities among the pixels based on their inherent properties, such as color or reflectance values. These similar pixels are then grouped into clusters, which represent distinct segments of the image.

Once the clusters are formed, the next step is to assign each cluster to a specific land cover class, such as vegetation, water, or urban areas. This step requires interpreting the clusters and matching them with real-world features, often guided by expert knowledge or auxiliary data. It’s a bit like organizing puzzle pieces into groups and then figuring out where they fit in the bigger picture.

One of the main reasons unsupervised classification is so popular is its simplicity. Unlike supervised classification, it doesn’t require pre-labeled samples or training data, making it a quick and easy method for understanding an image. Whether you’re exploring unfamiliar areas or working with limited resources, this approach offers a practical starting point for analyzing remote sensing data. While it may not always provide the precision of more advanced techniques, its ease of use and accessibility make it an invaluable tool for many applications.

The two basic steps for unsupervised classification are:

- Generate clusters

- Assign classes

We start by generating clusters using remote sensing software. Common image clustering algorithms include:

- K-means

- ISODATA

After selecting a clustering algorithm, you decide how many clusters to create. You can make 8, 20, or 42 clusters, for instance. More clusters generally make the pixels within each cluster more similar to one another, but they also leave you with more clusters to interpret and label. Fewer clusters are quicker to label, but each one lumps together more varied pixels.
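To make the cluster-generation step concrete, here is a minimal K-means sketch in Python with NumPy. It assumes the image is already loaded as a NumPy array of shape (rows, cols, bands); `kmeans_pixels` is a hypothetical helper, not a function from any remote sensing package, and ISODATA (which can also split and merge clusters) is not shown.

```python
import numpy as np

def kmeans_pixels(image, k, n_iter=20, seed=0):
    """Group the pixels of a (rows, cols, bands) image into k spectral clusters.

    Returns a (rows, cols) array of cluster IDs (0..k-1) and the (k, bands)
    cluster centers. The IDs are unlabeled clusters, not land cover classes.
    """
    rows, cols, bands = image.shape
    pixels = image.reshape(-1, bands).astype(float)
    rng = np.random.default_rng(seed)
    # Start from k randomly chosen pixels as the initial cluster centers.
    centers = pixels[rng.choice(len(pixels), size=k, replace=False)]

    def nearest_center(cs):
        # Squared Euclidean distance from every pixel to every center.
        d2 = ((pixels[:, None, :] - cs[None, :, :]) ** 2).sum(axis=2)
        return d2.argmin(axis=1)

    for _ in range(n_iter):
        labels = nearest_center(centers)
        # Move each center to the mean of its pixels (keep it if the cluster is empty).
        new_centers = np.array([
            pixels[labels == j].mean(axis=0) if np.any(labels == j) else centers[j]
            for j in range(k)
        ])
        if np.allclose(new_centers, centers):
            break  # centers stopped moving: converged
        centers = new_centers
    return nearest_center(centers).reshape(rows, cols), centers
```

Raising `k` typically lowers the spread of pixel values within each cluster, at the cost of more clusters to label in the next step.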

To be clear, these clusters are not yet classified. You then need to manually assign a land cover class to each cluster. For example, if you want to map vegetation versus non-vegetation, you assign each cluster to whichever of those two classes it best represents.
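The class-assignment step amounts to a lookup table from cluster ID to land cover class. In this sketch the mapping is the analyst's manual decision, written as a Python dict; `assign_classes`, the class names, and the integer codes are hypothetical examples.

```python
import numpy as np

def assign_classes(cluster_map, cluster_to_class, class_codes):
    """Recode a cluster-ID raster into a land cover raster.

    cluster_map: (rows, cols) int array of cluster IDs from a clustering step.
    cluster_to_class: the analyst's manual decision, e.g. {0: "vegetation"}.
    class_codes: integer code for each class name in the output raster.
    Clusters with no assigned class are coded as -1.
    """
    size = max(int(cluster_map.max()), max(cluster_to_class)) + 1
    lookup = np.full(size, -1, dtype=int)  # -1 = cluster not yet labeled
    for cluster_id, class_name in cluster_to_class.items():
        lookup[cluster_id] = class_codes[class_name]
    return lookup[cluster_map]  # vectorized lookup for every pixel

# Four clusters merged into two classes (vegetation vs. non-vegetation).
clusters = np.array([[0, 1], [2, 3]])
decisions = {0: "non-vegetation", 1: "vegetation", 2: "vegetation", 3: "non-vegetation"}
codes = {"non-vegetation": 0, "vegetation": 1}
landcover = assign_classes(clusters, decisions, codes)  # [[0, 1], [1, 0]]
```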

2. Supervised Classification

Supervised classification is a more controlled and targeted approach to image analysis in remote sensing. It begins by selecting representative samples, known as “training sites,” for each land cover class you’re interested in, such as water bodies, forests, or urban areas. These training sites act as a reference, helping the software recognize similar patterns throughout the entire image.

The process involves three basic steps. First, you carefully identify and select training areas that accurately represent each land cover class. Next, the software analyzes these samples and generates a “signature file,” which is essentially a set of unique characteristics for each class. Finally, using this signature file, the software applies the classification to the entire image, assigning each pixel to the most appropriate land cover category.
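As a rough illustration of what a signature file holds, the sketch below summarizes each class by the mean vector and covariance matrix of its training pixels, which are the statistics a maximum likelihood classifier needs. It assumes the training sites have been rasterized into a mask where 0 means unlabeled and 1, 2, ... are class IDs; the function name and the dict layout are hypothetical, and real signature file formats differ by software.

```python
import numpy as np

def build_signatures(image, training_mask):
    """Compute a per-class spectral signature from training sites.

    image: (rows, cols, bands) array.
    training_mask: (rows, cols) int array; 0 = unlabeled, 1..N = class IDs.
    Returns {class_id: {"mean": (bands,), "cov": (bands, bands), "n": count}}.
    """
    bands = image.shape[2]
    signatures = {}
    for class_id in np.unique(training_mask):
        if class_id == 0:
            continue  # skip pixels outside any training site
        samples = image[training_mask == class_id].reshape(-1, bands).astype(float)
        signatures[int(class_id)] = {
            "mean": samples.mean(axis=0),
            # rowvar=False: rows are pixels, columns are bands;
            # atleast_2d keeps a (1, 1) matrix for single-band images.
            "cov": np.atleast_2d(np.cov(samples, rowvar=False)),
            "n": len(samples),
        }
    return signatures
```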

Supervised classification is ideal when you have prior knowledge of the area and specific categories in mind. While it requires more input and effort upfront compared to unsupervised classification, it often yields more precise and reliable results. This makes it a go-to technique for detailed land use studies, environmental monitoring, and resource management.

For supervised image classification, you first create training samples. For example, you might outline urban areas in the image. You then continue adding training sites that are representative of the entire image.

You keep creating training samples for each land cover class until every class has representative samples. These samples produce a signature file that contains the spectral data from all training samples. The final step is to run a classification using the signature file, which requires choosing a classification algorithm such as:

- Maximum likelihood

- Minimum-distance

- Principal components

- Support vector machine (SVM)

- Iso cluster
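Two of the listed algorithms are simple enough to sketch directly. The functions below assume class signatures given as dicts of per-class mean vectors and covariance matrices keyed by class ID (a hypothetical layout). Minimum-distance assigns each pixel to the class with the nearest mean; the maximum likelihood version models each class as a multivariate Gaussian and assumes equal prior probabilities for all classes. SVM and the other listed methods are not shown.

```python
import numpy as np

def minimum_distance(image, means):
    """Assign each pixel to the class whose mean is closest (Euclidean)."""
    class_ids = np.array(sorted(means))
    centers = np.array([means[c] for c in class_ids])          # (classes, bands)
    pixels = image.reshape(-1, image.shape[-1]).astype(float)   # (pixels, bands)
    d2 = ((pixels[:, None, :] - centers[None, :, :]) ** 2).sum(axis=2)
    return class_ids[d2.argmin(axis=1)].reshape(image.shape[:2])

def maximum_likelihood(image, means, covs):
    """Assign each pixel to the class with the highest Gaussian log-likelihood
    (equal prior probabilities assumed)."""
    class_ids = np.array(sorted(means))
    pixels = image.reshape(-1, image.shape[-1]).astype(float)
    scores = []
    for c in class_ids:
        diff = pixels - means[c]
        inv = np.linalg.inv(covs[c])
        _, logdet = np.linalg.slogdet(covs[c])
        # log p(x | c) up to a constant: -0.5 * (log|S| + (x - m)^T S^-1 (x - m))
        mahal = np.einsum("ij,jk,ik->i", diff, inv, diff)
        scores.append(-0.5 * (logdet + mahal))
    # scores has shape (classes, pixels); pick the best class per pixel.
    return class_ids[np.argmax(scores, axis=0)].reshape(image.shape[:2])
```

Because maximum likelihood uses each class's covariance, a class with widely varying training pixels can claim pixels that lie farther from its mean than minimum-distance would allow.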

3. Object-Based Image Analysis (OBIA)

Both supervised and unsupervised classification work at the pixel level: the output is a grid of square pixels, each assigned a class. In object-based image classification, by contrast, pixels are grouped into representative vector shapes with size and geometry.

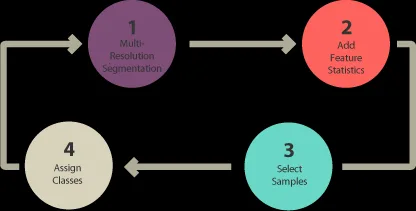

To perform object-based image classification, follow these steps:

- Perform multiresolution segmentation.

- Select training areas.

- Define statistics.

- Classify.

Object-based image analysis (OBIA) segments an image by grouping pixels. It does not output individual classified pixels; instead, it produces objects with different geometries. With the right imagery, these objects can be meaningful enough to do much of the digitizing for you. For instance, buildings are highlighted in the segmentation results below.
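Production tools such as multiresolution segmentation and mean shift are considerably more sophisticated, but the core idea of grouping adjacent, similar pixels into objects can be sketched with simple region growing. This is a swapped-in, simplified technique, not the algorithm either tool uses; `region_grow` and its `max_diff` threshold are hypothetical.

```python
from collections import deque
import numpy as np

def region_grow(image, max_diff):
    """Group 4-connected pixels into objects (simplified region growing).

    A neighbor joins an object if it differs from the object's seed pixel
    by at most max_diff in every band. Returns (rows, cols) object IDs from 1.
    """
    if image.ndim == 2:
        image = image[:, :, None]  # treat single-band input as one band
    rows, cols, _ = image.shape
    objects = np.zeros((rows, cols), dtype=int)
    next_id = 1
    for r in range(rows):
        for c in range(cols):
            if objects[r, c]:
                continue  # already part of an object
            seed = image[r, c].astype(float)
            objects[r, c] = next_id
            queue = deque([(r, c)])
            while queue:  # breadth-first flood fill from the seed
                y, x = queue.popleft()
                for ny, nx in ((y - 1, x), (y + 1, x), (y, x - 1), (y, x + 1)):
                    if (0 <= ny < rows and 0 <= nx < cols and not objects[ny, nx]
                            and np.all(np.abs(image[ny, nx] - seed) <= max_diff)):
                        objects[ny, nx] = next_id
                        queue.append((ny, nx))
            next_id += 1
    return objects
```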

The two segmentation algorithms that are most frequently used are:

- Multiresolution segmentation in eCognition

- ArcGIS Pro’s Segment Mean Shift tool

There are various techniques for classifying objects in object-based image analysis (OBIA). For example, you can use:

SHAPE: A shape statistic such as “rectangular fit” can be used to classify structures. It measures how closely an object’s geometry matches a rectangle.

TEXTURE: Texture describes how homogeneous an object is. Water, for instance, is mostly homogeneous because of its uniform dark blue color. Forests, however, are a mixture of green and black tones and contain shadows.

SPECTRAL: You can use the mean values of spectral bands such as red, green, blue, short-wave infrared, and near-infrared.

GEOGRAPHIC CONTEXT: Objects can be classified by their distance and proximity relationships with neighboring objects.
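The sketch below computes one simple statistic of each kind listed above for every object in an object ID raster, such as one produced by segmentation. Assumptions: shape is measured as the fraction of the object’s axis-aligned bounding box it fills, a simplified stand-in that is not eCognition’s exact rectangular fit definition; texture is the standard deviation of pixel values inside the object; geographic context is the distance from the object’s centroid to the nearest other object’s centroid. All names are hypothetical.

```python
import numpy as np

def object_features(image, objects):
    """Compute simple per-object statistics.

    image: (rows, cols, bands) array; objects: (rows, cols) int IDs (0 = none).
    """
    feats = {}
    for obj_id in np.unique(objects):
        if obj_id == 0:
            continue
        ys, xs = np.nonzero(objects == obj_id)
        values = image[ys, xs].astype(float)  # (pixels, bands)
        bbox_area = (ys.max() - ys.min() + 1) * (xs.max() - xs.min() + 1)
        feats[int(obj_id)] = {
            # SHAPE (simplified): share of the bounding box the object fills.
            "rect_fit": len(ys) / bbox_area,
            # TEXTURE: spread of values inside the object; low = homogeneous.
            "texture_std": values.std(axis=0),
            # SPECTRAL: mean value of each band.
            "mean": values.mean(axis=0),
            "centroid": (ys.mean(), xs.mean()),
        }
    # GEOGRAPHIC CONTEXT: distance to the nearest other object's centroid.
    for i, fi in feats.items():
        dists = [np.hypot(fi["centroid"][0] - fj["centroid"][0],
                          fi["centroid"][1] - fj["centroid"][1])
                 for j, fj in feats.items() if j != i]
        fi["nearest_dist"] = min(dists) if dists else np.inf
    return feats
```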

NEAREST NEIGHBOR CLASSIFICATION: Nearest neighbor (NN) classification is similar to supervised classification. After multiresolution segmentation, the user selects sample objects for each land cover class and then defines the statistics used to classify objects. Finally, the nearest neighbor classifier assigns each object to a class based on how similar its statistics are to those of the training samples.
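A plain one-nearest-neighbor version of this step can be sketched as follows. It assumes each object has already been reduced to a feature vector (for example, mean band values plus texture and shape statistics) and that the user has picked some objects as training samples. Features are standardized so that large-valued statistics do not dominate the distance. This is a simplified sketch, commercial OBIA software may implement nearest neighbor classification differently, and the function name is hypothetical.

```python
import numpy as np

def nearest_neighbor_classify(features, train_ids, train_labels):
    """Label every object with the class of its most similar training object.

    features: {object_id: 1-D feature vector}.
    train_ids / train_labels: sample objects chosen by the user and their classes.
    """
    ids = sorted(features)
    X = np.array([features[i] for i in ids], dtype=float)
    # Standardize each feature (z-score) so no single statistic dominates.
    mu, sigma = X.mean(axis=0), X.std(axis=0)
    sigma[sigma == 0] = 1.0
    Z = (X - mu) / sigma
    row = {obj_id: k for k, obj_id in enumerate(ids)}
    train = Z[[row[t] for t in train_ids]]
    result = {}
    for obj_id in ids:
        d = np.linalg.norm(train - Z[row[obj_id]], axis=1)
        result[obj_id] = train_labels[int(d.argmin())]
    return result

# Features: [mean NIR value, texture]; objects 1 and 2 are the training samples.
feats = {1: [10, 0.1], 2: [200, 0.9], 3: [15, 0.2], 4: [190, 0.8]}
labels = nearest_neighbor_classify(feats, [1, 2], ["water", "forest"])
```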

4. Deep Learning Object Detection

The rise of deep learning in object detection has been transformative, particularly in the field of remote sensing. Neural networks, with their ability to learn from massive datasets, have unlocked new levels of accuracy and efficiency in identifying objects in satellite and aerial images. From detecting trees in dense forests to pinpointing buildings in sprawling urban landscapes, these models have proven to be incredibly powerful.

One of the key strengths of deep learning lies in its capacity to learn complex patterns and features directly from the data. Unlike traditional methods, which often require manual feature extraction, deep learning models automatically identify the most relevant characteristics for object detection. This makes them adaptable to many different types of images and objects, and often more robust to variations in illumination and scene conditions.

With advancements in neural network architectures, such as convolutional neural networks (CNNs) and transformers, deep learning has become the backbone of many remote sensing applications. These tools not only improve accuracy but also enable faster processing of vast amounts of imagery, paving the way for innovations in environmental monitoring, disaster response, and urban planning. The potential for growth in this area is truly enormous, as new datasets and models continue to expand the boundaries of what’s possible.
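To show what learning features “directly from the data” means at the lowest level, here is the basic operation inside a CNN layer: a small filter slides over the image, and a ReLU keeps only positive responses. In a trained network the filter weights are learned from labeled examples; here they are hand-set to a vertical-edge detector purely for illustration, and this is not an object detection model. `conv2d_relu` is a hypothetical helper.

```python
import numpy as np

def conv2d_relu(image, kernel, bias=0.0):
    """One convolutional filter followed by ReLU ('valid' padding, stride 1).

    Like most deep learning libraries, this computes cross-correlation
    (the kernel is not flipped).
    """
    kh, kw = kernel.shape
    out_h, out_w = image.shape[0] - kh + 1, image.shape[1] - kw + 1
    out = np.empty((out_h, out_w))
    for r in range(out_h):
        for c in range(out_w):
            out[r, c] = np.sum(image[r:r + kh, c:c + kw] * kernel) + bias
    return np.maximum(out, 0.0)  # ReLU keeps positive responses only

# A dark-to-bright vertical edge and a hand-set edge filter.
img = np.array([[0, 0, 9], [0, 0, 9], [0, 0, 9]], dtype=float)
edge_kernel = np.array([[-1, 1], [-1, 1]], dtype=float)
response = conv2d_relu(img, edge_kernel)  # strong response only at the edge
```

A CNN stacks many such filters and layers, and training adjusts every kernel value so the responses become useful for the detection task.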

You can find deep learning models for tasks such as:

- Car/ship detection

- Tree segmentation

- Land cover classification

- Agricultural field delineation

- Building footprint detection

- Wildfire delineation
